There is a controller in nearly every modern Kubernetes cluster that operators rarely think about. ExternalDNS watches the cluster's Ingress, Service, Gateway, and HTTPRoute objects, and, when one of them claims a hostname, it reconciles the corresponding A / AAAA / CNAME record in the cloud DNS provider — Route 53, Cloud DNS, Azure DNS, DigitalOcean, Cloudflare, and a few dozen others. To make sure two clusters do not fight over the same record, ExternalDNS also writes a small TXT record next to each managed record containing a registry marker: heritage=external-dns,external-dns/owner=<owner-id>,external-dns/resource=<KIND>/<NAMESPACE>/<RESOURCE-NAME>. The owner ID is meant to identify this cluster so a different cluster's controller will see the marker and step away. The resource path tells the controller which Kubernetes object the record belongs to so it knows when to delete it.

The marker is not a secret. ExternalDNS publishes it in the cloud-DNS zone the operator already controls; it has the same visibility as the A record next to it. But it is also, in effect, a self-updating inventory of every Kubernetes cluster on the public Internet that uses ExternalDNS with the default TXT registry. Anyone with dig and a hostname list can read it.

A second Kubernetes signal lives in the CNAME chain of every K8s-fronted hostname. When a cluster operator creates an Ingress and the cloud's load-balancer controller provisions a managed LB, the LB's DNS name is conventional and recognisable: k8s-<namespace>-<name>-<hash>.elb.<region>.amazonaws.com for the AWS Load Balancer Controller's default name format, k8s-<...>.<region>.cloudapp.azure.com for AKS, *.gke.goog for GKE, *.k8s.ondigitalocean.com for DOKS, *.openshiftapps.com for OpenShift, *.civo.com for Civo, *.oraclecloud.com for Oracle Container Engine. A customer hostname pointing at any of these via CNAME is, with high precision, a Kubernetes-fronted apex.

We extracted both signals from the same 17 April 2026 crawl. The TXT side: 3,105 result archives, 840 GB of raw single-line JSONL, ~1.9 billion hostname queries — every TXT data string starting with heritage=external-dns. The CNAME side: 3,105 A-typed result archives (59 GB compressed) — every hostname whose CNAME chain terminates in a managed-K8s ingress suffix. We rolled both up to the registrable apex (eTLD+1) via the Mozilla Public Suffix List, then took the union. The result is the first combined-signal cluster-identity census of the public Kubernetes footprint.

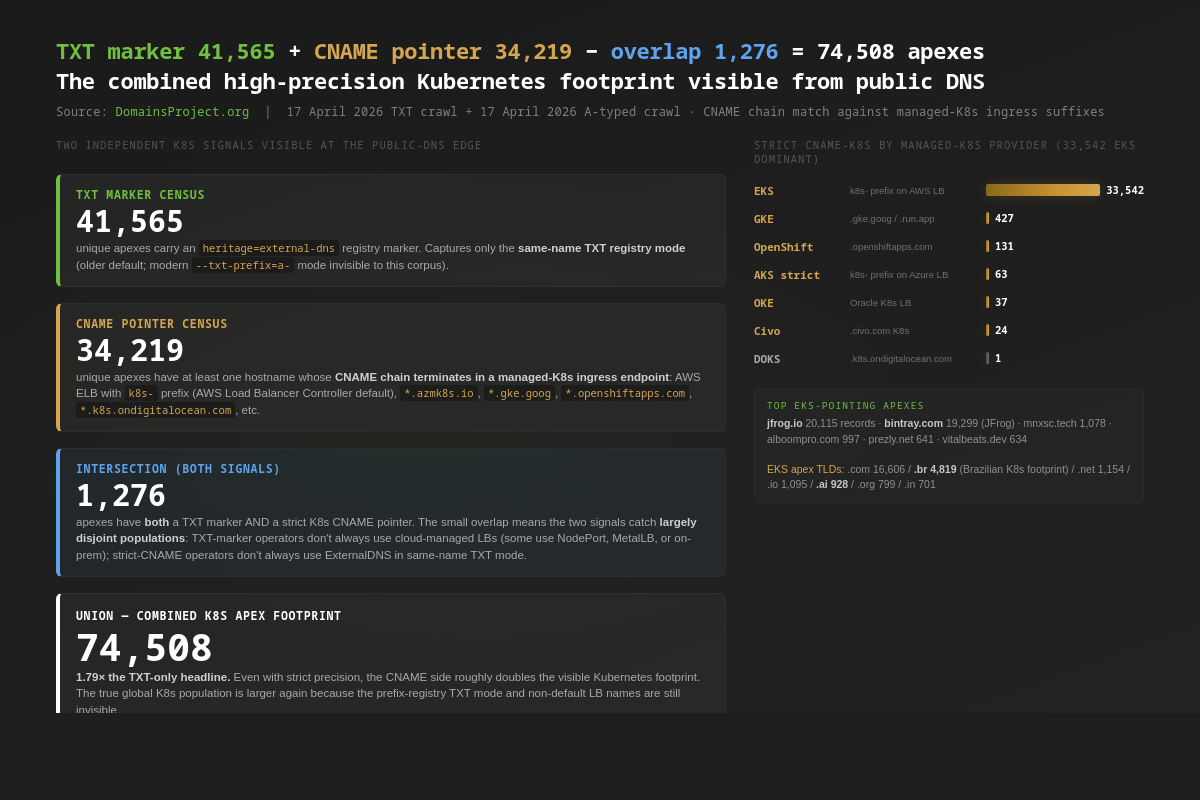

The headline: 74,508 unique apex domains carry at least one strict-precision Kubernetes signal in the public DNS. Of these, 41,565 have heritage=external-dns TXT markers, 34,219 have CNAME chains terminating in managed-K8s ingress endpoints, and 1,276 carry both. The two populations are largely disjoint: TXT-marker operators don't always use cloud-managed LBs (some run NodePort, MetalLB, or on-prem ingress), and strict-CNAME operators don't always run ExternalDNS in same-name TXT mode.

The CNAME side adds 33,542 EKS-fronted apexes (AWS Load Balancer Controller default name format), 427 GKE, 131 OpenShift, 63 strict-AKS, 37 OKE, 24 Civo, and 1 DOKS. AWS dominates the visible managed-K8s LB layer at 98.0% of strict-CNAME apexes.

The TXT side reveals 20,420 distinct cluster identities. Of the 41,565 TXT-side apexes, 13,620 (32.8%) use the literal string default as their cluster ID, the unconfigured value ExternalDNS assigns when an operator does not set txtOwnerId in their Helm chart. 815 apexes use the literal example strings from the ExternalDNS README (my-identifier, my-hostedzone-identifier). 2,268 apexes use what is recognisably an AWS Route 53 hosted-zone ID as the owner string. 6,842 apexes publish a sensitive Kubernetes namespace name (argocd, vault, kube-system, istio-system, monitoring, prometheus) to the public DNS via the resource path. 1,936 apexes have already migrated to the Gateway API. The single largest individual cluster footprint is clickbank.net's affiliate-hop infrastructure: 443,575 ExternalDNS-managed hostnames under one Route-53-zone-ID-shaped owner.

Including the dual-use layers (5,619,592 customer apexes pointing at AWS LBs without the k8s- prefix, mostly EC2 but containing a meaningful K8s minority, plus the broad Azure cloudapp population) gives an inclusive upper bound of ~6.04 million apexes with some K8s-cloud ingress signal. Not all of these are Kubernetes; they are the public DNS surface that K8s operators share with EC2 / VM operators.

The Data

| Component | Value |
|---|---|
| Crawl date | 17 April 2026 |
| TXT-typed corpus | 3,105 .xz files / 840 GB raw JSONL / 56 GB xz |
| A-typed corpus (with full CNAME chains) | 3,105 .xz files / 59 GB xz |
| Hostname queries against TXT (estimated) | ~1,902,793,680 |
| ExternalDNS TXT data strings extracted | 847,856 |
| Distinct hostnames carrying the TXT marker | 837,977 |
| Distinct apex domains (TXT side, PSL-aware eTLD+1) | 41,565 |
| Distinct external-dns/owner=… values | 20,420 |
| Distinct external-dns/resource=… namespaces | 53,753 |
| Distinct resource kinds | 28 |
| CNAME chains pointing to managed-K8s ingress (raw rows) | 9,224,115 |
| Distinct apex domains (strict CNAME, customer-only) | 34,219 |
| Distinct apex domains (TXT ∪ strict CNAME) | 74,508 |
| Inclusive apex count incl. dual-use AWS LB / Azure cloudapp | ~6,044,392 |
| TLDs covered (TXT side) | 512 |

The TXT corpus was queried hostname-by-hostname against the master dataset. Because the ExternalDNS default TXT registry stores its marker at the same name as the record it is registering (rather than at a synthetic prefix), the marker is captured by ordinary apex / subdomain TXT crawls. The newer prefix registry mode (--txt-prefix=…, often a- or a custom string), which has become the recommended default since ExternalDNS 0.12, stores the marker at a different name (e.g. a-www.example.com for the record at www.example.com) and is systematically under-represented in the TXT-side corpus — we capture only those operators who have explicitly preserved the same-name registry behaviour. The TXT-side number alone (41,565 apexes) is a lower bound; the CNAME side closes a meaningful part of that gap by detecting the cloud-LB pointer regardless of the operator's TXT-registry mode.

The A-typed corpus carries the full CNAME chain in each query result. When the resolver follows customer.example.com → cdn.example.com → k8s-payments-prod-7c4d2.elb.us-east-1.amazonaws.com → 52.x.y.z, every link in the chain (two CNAME records and the final A record) is present in the answers array. We scan every CNAME hop for managed-K8s suffixes (*.elb.amazonaws.com, *.cloudapp.azure.com, *.azmk8s.io, *.gke.goog, *.openshiftapps.com, *.k8s.ondigitalocean.com, *.civo.com, *.oraclecloud.com, *.lke.akamai.com, *.linodelke.net, *.run.app) and tag the originating apex. We exclude apexes that are the cloud provider itself (amazonaws.com, azure.net, cloudapp.azure.com, googleusercontent.com, etc.) so cloud-internal infrastructure self-references don't inflate the customer-apex count.

The crawl excludes Russian-territorial TLDs (.ru, .su, .moscow, .xn--p1acf, .xn--p1ai, .yandex) per project policy. All counts in this post are post-exclusion.

Methodology

Two signals, two extractors, two censuses. Side A is the TXT-marker census from the TXT-typed corpus. Side B is the CNAME-pointer census from the A-typed corpus. The headline 74,508 is the union of two strict-precision apex sets.

What "ExternalDNS marker" means in this post. A marker is a TXT data string of the form heritage=external-dns,external-dns/owner=<owner>,external-dns/resource=<KIND>/<NAMESPACE>/<NAME>. The classifier matches case-insensitively on the heritage=external-dns prefix and parses the comma-separated key-value tail; missing fields are tolerated (older ExternalDNS versions sometimes omit the resource= field). One row is emitted per hostname × matching TXT answer; downstream sort -u deduplicates (apex) and (owner, apex) tuples for the headline counts.
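The marker grammar described above can be sketched as a small parser. This is a minimal illustration under stated assumptions, not the extractor used for the census: the Marker type and the parseMarker function are hypothetical names, and stripping surrounding quotes from the TXT data is an assumption about how the crawl stores strings.

```go
package main

import (
	"fmt"
	"strings"
)

// Marker holds the fields of one ExternalDNS registry TXT string.
// The resource parts stay empty when the resource= field is absent.
type Marker struct {
	Owner     string
	Kind      string
	Namespace string
	Name      string
}

const heritagePrefix = "heritage=external-dns"

// parseMarker reports ok=false when txt does not start with the heritage
// prefix. The prefix comparison is case-insensitive, as in the text.
func parseMarker(txt string) (m Marker, ok bool) {
	// Assumption: TXT data may arrive wrapped in double quotes.
	txt = strings.Trim(strings.TrimSpace(txt), `"`)
	if len(txt) < len(heritagePrefix) ||
		!strings.EqualFold(txt[:len(heritagePrefix)], heritagePrefix) {
		return Marker{}, false
	}
	for _, field := range strings.Split(txt, ",") {
		key, value, found := strings.Cut(field, "=")
		if !found {
			continue // tolerate fragments without a key=value shape
		}
		switch strings.ToLower(strings.TrimSpace(key)) {
		case "external-dns/owner":
			m.Owner = value
		case "external-dns/resource":
			// KIND/NAMESPACE/NAME; SplitN keeps any later "/" in the name.
			parts := strings.SplitN(value, "/", 3)
			if len(parts) == 3 {
				m.Kind, m.Namespace, m.Name = parts[0], parts[1], parts[2]
			}
		}
	}
	return m, true
}

func main() {
	m, ok := parseMarker("heritage=external-dns,external-dns/owner=default,external-dns/resource=ingress/argocd/argocd-server")
	fmt.Println(ok, m.Owner, m.Kind, m.Namespace, m.Name)
}
```

A marker without a resource= field still parses; its Kind, Namespace, and Name simply stay empty, which mirrors the tolerance for older ExternalDNS versions noted above.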

What "strict CNAME-side K8s signal" means. A CNAME-chain hop ends in one of:

| Provider | Suffix pattern | Strict / broad |
|---|---|---|
| EKS (AWS) | *.elb.amazonaws.com / *.elb.<region>.amazonaws.com / *.<region>.elb.amazonaws.com, leftmost label starts with k8s- | strict (AWS Load Balancer Controller default name format) |
| AKS (Azure) | *.cloudapp.azure.com (broad) or *.azmk8s.io; strict subset is leftmost label starting with k8s- | strict (k8s- prefix) / broad (all cloudapp.azure.com) |
| GKE (Google) | *.gke.goog / *.run.app | strict |
| OpenShift | *.openshiftapps.com / *.apps.openshift.com | strict |
| OKE (Oracle) | *.oraclecloud.com with oke or lb in leftmost label | strict |
| DOKS (DigitalOcean) | *.k8s.ondigitalocean.com | strict |
| Civo | *.civo.com containing k8s | strict |
| Akamai LKE | *.lke.akamai.com / *.linodelke.net | strict |
| (dual-use) | *.elb.amazonaws.com without k8s- prefix | broad (mostly EC2; reported as alb_aws) |
| (dual-use) | *.cloudapp.azure.com without k8s- prefix | broad |

The strict set is the AWS Load Balancer Controller / cloud-K8s-LB-naming-convention layer; we use it as the headline. The broad set adds the dual-use AWS LB and Azure cloudapp populations as an inclusive upper-bound caveat.
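The strict / broad split can be illustrated with a small classifier. This sketch covers only four rows of the table (EKS, AKS cloudapp, GKE's gke.goog, DOKS) and is not the census extractor; the function names and the provider tags other than alb_aws are hypothetical.

```go
package main

import (
	"fmt"
	"strings"
)

// classifyHop returns a provider tag for one CNAME target and whether the
// match is strict. Only a subset of the suffix table is covered here.
func classifyHop(target string) (provider string, strict bool) {
	host := strings.ToLower(strings.TrimSuffix(target, "."))
	leftmost, _, _ := strings.Cut(host, ".")
	k8sPrefix := strings.HasPrefix(leftmost, "k8s-")

	switch {
	// Matches *.elb.amazonaws.com, *.elb.<region>.amazonaws.com and
	// *.<region>.elb.amazonaws.com alike.
	case strings.HasSuffix(host, ".amazonaws.com") && strings.Contains(host, ".elb."):
		if k8sPrefix {
			return "eks", true
		}
		return "alb_aws", false // dual-use: mostly EC2
	case strings.HasSuffix(host, ".cloudapp.azure.com"):
		if k8sPrefix {
			return "aks", true
		}
		return "azure_cloudapp", false // dual-use
	case strings.HasSuffix(host, ".gke.goog"):
		return "gke", true
	case strings.HasSuffix(host, ".k8s.ondigitalocean.com"):
		return "doks", true
	}
	return "", false
}

// firstStrictHop scans a CNAME chain and returns the first strict match.
func firstStrictHop(chain []string) (string, bool) {
	for _, hop := range chain {
		if p, strict := classifyHop(hop); strict {
			return p, true
		}
	}
	return "", false
}

func main() {
	chain := []string{"cdn.example.com", "k8s-payments-prod-7c4d2.elb.us-east-1.amazonaws.com"}
	fmt.Println(firstStrictHop(chain))
}
```

Keeping the broad tags separate from the strict ones is what lets the headline count stay on the strict set while the dual-use populations are reported only as an upper bound.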

Apex extraction is PSL-aware. We use the Mozilla Public Suffix List via golang.org/x/net/publicsuffix to compute the registrable apex of every queried hostname. argocd-server.argocd.example.co.uk rolls up to example.co.uk, not co.uk. This corrects last-2-label heuristics that mis-attribute compound suffixes like .co.uk, .com.br, .gov.in.
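The difference between the two roll-up strategies can be shown with a self-contained sketch. To stay dependency-free it uses a tiny hand-picked suffix table (toySuffixes, a hypothetical stand-in) instead of the real Public Suffix List; in the actual pipeline the equivalent step is a call to EffectiveTLDPlusOne from golang.org/x/net/publicsuffix.

```go
package main

import (
	"fmt"
	"strings"
)

// toySuffixes is a tiny stand-in for the Public Suffix List, for
// illustration only. The real list has thousands of entries.
var toySuffixes = map[string]bool{
	"com": true, "uk": true, "co.uk": true,
	"br": true, "com.br": true, "in": true, "gov.in": true,
}

func labelsOf(host string) []string {
	return strings.Split(strings.ToLower(strings.TrimSuffix(host, ".")), ".")
}

// naiveApex keeps the last two labels: the heuristic that mis-attributes
// compound suffixes such as .co.uk.
func naiveApex(host string) string {
	labels := labelsOf(host)
	if len(labels) < 2 {
		return ""
	}
	return strings.Join(labels[len(labels)-2:], ".")
}

// suffixAwareApex finds the longest known suffix and keeps one more label.
// It returns "" when the host is itself a suffix or no suffix is known.
func suffixAwareApex(host string) string {
	labels := labelsOf(host)
	for i := 0; i < len(labels); i++ {
		if toySuffixes[strings.Join(labels[i:], ".")] {
			if i == 0 {
				return "" // the name is a public suffix, not a registrable apex
			}
			return strings.Join(labels[i-1:], ".")
		}
	}
	return ""
}

func main() {
	host := "argocd-server.argocd.example.co.uk"
	fmt.Println("naive:       ", naiveApex(host))
	fmt.Println("suffix-aware:", suffixAwareApex(host))
}
```

Scanning from the left means the first hit is the longest matching suffix, so co.uk wins over uk without any extra bookkeeping.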

Cloud-self-reference exclusion. When the cloud provider's own infrastructure points at K8s LBs (e.g., Azure querying *.arc.azure.net for AKS-attached clusters), the originating apex is the cloud provider, not a customer. We exclude apexes ending in .azure.net, .amazonaws.com, .googleusercontent.com, .cloudapp.azure.com, .openshiftapps.com, .oraclecloud.com, .gke.goog, .run.app from the customer-apex CNAME counts.

One apex, many records. A single apex frequently carries hundreds or thousands of K8s-managed hostnames. clickbank.net alone holds 443,575 distinct ExternalDNS-managed names; jfrog.io carries 20,115 hostnames pointing at EKS LBs. For the cluster-identity count we deduplicate to apex; for the resource-exposure analysis (kinds, namespaces) we count records.

Generic-placeholder definition. We classify an owner ID as generic if it matches one of: default, external-dns, my-identifier, my-hostedzone-identifier, k8s, prod, production, dev, staging, test, homelab. The first is the unconfigured ExternalDNS default value and the second is simply the controller's own name; the next two are the literal example strings from the README's "Configuration" section; the rest are obvious environment-name placeholders. All of them defeat the cross-cluster collision protection the registry is supposed to provide.

Sensitive-namespace definition. We classify a Kubernetes namespace name as sensitive if it matches one of: kube-system, kube-public, vault, secrets, monitoring, prometheus, argocd, istio-system, cert-manager. These are the namespaces that, when leaked, narrow an attacker's recon work most efficiently — they reveal which controllers and platform components a cluster is running.
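Both definitions above are exact-match lookups against a fixed list, which can be sketched as two sets. The lists are copied from the text; the function names are hypothetical, and normalising case and surrounding whitespace before the lookup is an assumption rather than a documented detail of the classifier.

```go
package main

import (
	"fmt"
	"strings"
)

// toSet builds a lookup set from a list of names.
func toSet(names ...string) map[string]struct{} {
	set := make(map[string]struct{}, len(names))
	for _, n := range names {
		set[n] = struct{}{}
	}
	return set
}

var (
	genericOwners = toSet("default", "external-dns", "my-identifier",
		"my-hostedzone-identifier", "k8s", "prod", "production",
		"dev", "staging", "test", "homelab")
	sensitiveNamespaces = toSet("kube-system", "kube-public", "vault",
		"secrets", "monitoring", "prometheus", "argocd",
		"istio-system", "cert-manager")
)

// isGenericOwner reports whether an owner ID is one of the placeholders.
// Matching is exact: "homelab-k3s" is not "homelab".
func isGenericOwner(owner string) bool {
	_, ok := genericOwners[strings.ToLower(strings.TrimSpace(owner))]
	return ok
}

// isSensitiveNamespace reports whether a namespace is on the sensitive list.
func isSensitiveNamespace(ns string) bool {
	_, ok := sensitiveNamespaces[strings.ToLower(strings.TrimSpace(ns))]
	return ok
}

func main() {
	fmt.Println(isGenericOwner("default"), isGenericOwner("payments-prod-eu1"))
	fmt.Println(isSensitiveNamespace("argocd"), isSensitiveNamespace("frontend"))
}
```

Exact matching keeps both counts conservative: variants such as homelab-k3s or payments-prod fall outside the lists even though a human reader would recognise them.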

Reproducibility. The TXT extractor is ~80 lines of Go (streaming JSONL reader, heritage=external-dns prefix filter, comma-separated KV parser). The CNAME extractor is ~150 lines (streaming JSONL reader, type-CNAME-only fast filter, suffix-match classifier, dedup-within-answer-set). Both run at ~16-way parallelism via xargs -P16 against the .xz archive directories. Aggregation is shell + awk + sort -u. The 17 April 2026 active dataset is publicly available at /dataset. The TXT- and A-typed crawls are part of the subscriber feed.
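The streaming shape of the TXT extractor can be sketched as follows. The JSONL schema here (name, answers[].type, answers[].data) is hypothetical, since the post does not document the archive format beyond mentioning an answers array; the function names are likewise illustrative. In practice the reader would be fed from a decompressor, for example one xz -dc process per archive under xargs.

```go
package main

import (
	"bufio"
	"bytes"
	"encoding/json"
	"fmt"
	"io"
	"strings"
)

// result mirrors a HYPOTHETICAL per-hostname JSONL record.
type result struct {
	Name    string `json:"name"`
	Answers []struct {
		Type string `json:"type"`
		Data string `json:"data"`
	} `json:"answers"`
}

const heritage = "heritage=external-dns"

// extractMarkers reads one JSON object per line and calls emit for every
// TXT answer whose data starts with the heritage prefix.
func extractMarkers(r io.Reader, emit func(host, txt string)) error {
	sc := bufio.NewScanner(r)
	sc.Buffer(make([]byte, 0, 64*1024), 4*1024*1024) // allow long lines
	for sc.Scan() {
		line := sc.Bytes()
		// Cheap byte-level pre-filter before paying for JSON decoding.
		if !bytes.Contains(bytes.ToLower(line), []byte(heritage)) {
			continue
		}
		var res result
		if err := json.Unmarshal(line, &res); err != nil {
			continue // skip malformed lines rather than abort the archive
		}
		for _, a := range res.Answers {
			txt := strings.Trim(a.Data, `"`)
			if a.Type == "TXT" && strings.HasPrefix(strings.ToLower(txt), heritage) {
				emit(res.Name, txt)
			}
		}
	}
	return sc.Err()
}

// markersIn is a small convenience wrapper used by the demo below.
func markersIn(jsonl string) []string {
	var out []string
	_ = extractMarkers(strings.NewReader(jsonl), func(host, txt string) {
		out = append(out, host+"\t"+txt)
	})
	return out
}

func main() {
	sample := `{"name":"app.example.com","answers":[{"type":"TXT","data":"heritage=external-dns,external-dns/owner=default"}]}
{"name":"mail.example.com","answers":[{"type":"TXT","data":"v=spf1 -all"}]}`
	for _, row := range markersIn(sample) {
		fmt.Println(row)
	}
}
```

The byte-level pre-filter is the design choice that matters at this scale: the vast majority of lines never contain the prefix, so they are skipped without being decoded.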

Two Signals, Two Censuses

The headline 74,508 apexes is the union of two strict-precision Kubernetes signals:

| Signal | Apexes | Description |
|---|---|---|
| TXT marker | 41,565 | At least one heritage=external-dns record (the operator's internal cluster identity) |
| CNAME pointer (strict) | 34,219 | At least one CNAME hop terminating in a managed-K8s LB suffix with strict naming convention |
| Both | 1,276 | Apexes confirmed by both methods |
| Union | 74,508 | The combined high-precision K8s footprint |

The two populations are largely disjoint. Only 1,276 apexes (3.07% of the TXT-marker population, 3.73% of the strict-CNAME population) carry both signals. The disjointness is the methodologically interesting result. It tells us:

- TXT-marker operators don't always front their cluster with a cloud-managed LB. Some run NodePort + external HAProxy. Some use MetalLB on bare metal. Some run on-prem with a private CDN in front. Some use AWS LBs but with custom names that defeat the k8s- prefix heuristic. The 40,289 TXT-marker apexes not in the strict CNAME set are predominantly these "TXT marker + non-default LB topology" installations.

- Strict-CNAME operators don't always run ExternalDNS in same-name TXT mode. Many run ExternalDNS in prefix-registry mode (--txt-prefix=a-, the default since v0.12), which puts the TXT marker at a synthetic name our hostname-as-is corpus doesn't query. Others use the AWS Load Balancer Controller without ExternalDNS at all and write A records manually or via Terraform. The 32,943 strict-CNAME apexes not in the TXT-marker set are predominantly these "modern ExternalDNS + cloud LB" or "non-ExternalDNS + cloud LB" installations.

The CNAME side closes a meaningful part of the prefix-registry blind spot, but not all of it: prefix-registry operators who also use non-default LB names remain invisible at both layers.
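The union and overlap figures in this section follow from inclusion-exclusion on the two apex sets. A short worked check, using only the counts quoted above (the helper names are illustrative):

```go
package main

import "fmt"

// unionSize applies inclusion-exclusion to two sets with a known overlap.
func unionSize(a, b, both int) int { return a + b - both }

// pct returns part as a percentage of whole.
func pct(part, whole int) float64 { return 100 * float64(part) / float64(whole) }

func main() {
	const txt, cname, both = 41565, 34219, 1276

	fmt.Println("union:", unionSize(txt, cname, both))
	fmt.Printf("overlap: %.2f%% of TXT, %.2f%% of strict CNAME\n",
		pct(both, txt), pct(both, cname))
	fmt.Println("TXT only:", txt-both, "CNAME only:", cname-both)
}
```

The two "only" figures are the 40,289 and 32,943 apexes discussed in the bullets above.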

Per-provider breakdown of the strict CNAME-K8s population

| Provider | Apexes | Share of strict CNAME | Note |
|---|---|---|---|
| EKS (AWS, k8s-prefix LB) | 33,542 | 98.0% | AWS Load Balancer Controller default name format |
| GKE (.gke.goog / .run.app) | 427 | 1.25% | |
| OpenShift (.openshiftapps.com) | 131 | 0.38% | Mostly Red Hat OpenShift Online tenants |
| AKS strict (k8s-prefix Azure LB) | 63 | 0.18% | Azure operators rarely use the k8s- LB-naming convention; broad AKS (all cloudapp.azure.com customers) is much larger but dual-use |
| OKE (Oracle) | 37 | 0.11% | |
| Civo | 24 | 0.07% | |
| DOKS (.k8s.ondigitalocean.com) | 1 | 0.003% | DOKS apparently uses a different LB suffix in the wild |

AWS dominates the visible managed-K8s LB layer at 98%. This is a more skewed distribution than seat-share or revenue-share figures from CNCF surveys would suggest, and it reflects two things: (1) AWS's market lead in production K8s deployments at the public-DNS edge, and (2) the AWS Load Balancer Controller's strong naming convention which makes its installs trivially identifiable from the outside. GKE's 427 strict apexes is artificially low because GKE installs tend to use direct A records or custom CNAMEs rather than *.gke.goog-suffixed pointers; the visible GKE footprint at the DNS layer is genuinely smaller than its installed-base share.

Top customer apexes pointing at EKS K8s LBs

| Apex | Hostname records | Note |
|---|---|---|
| jfrog.io | 20,115 | JFrog's per-customer Artifactory SaaS subdomains, all on EKS |
| bintray.com | 19,299 | JFrog's deprecated Bintray (still resolvable) |
| mnxsc.tech | 1,078 | |
| alboompro.com | 997 | Alboom photography platform |
| prezly.net | 641 | Prezly PR software |
| vitalbeats.dev | 634 | |
| livinggoods.net | 558 | |
| cartera.com | 366 | |

The JFrog rows (~39K combined) confirm a long-known operational fact about JFrog: its multi-tenant SaaS runs on EKS, and every per-customer subdomain is fronted by an AWS LB Controller-managed NLB / ALB. JFrog never published the cluster topology, but the public CNAME chain has been telling anyone who looked.

EKS by TLD

The TLD distribution of the EKS-fronted apex population is a clean cloud-native signature:

| TLD | EKS apexes |
|---|---|
| .com | 16,606 |
| .br | 4,819 |
| .net | 1,154 |
| .io | 1,095 |
| .ai | 928 |
| .org | 799 |
| .in | 701 |
| .co | 380 |
| .dev | 379 |
| .uk | 370 |

.br at 4,819 is striking — Brazilian operators run a disproportionately large EKS footprint relative to the country's general-DNS share, in line with the strong São Paulo region presence and the Brazilian fintech/SaaS sector's heavy EKS adoption. .in at 701 is the same pattern for India. The .io / .ai / .dev cluster recurs from the TXT-side TLD analysis: the same developer-coded TLDs that dominate the TXT marker population also dominate the EKS population.

Where the K8s Apexes Live: DNS-Provider Cross-Tab

We extracted the NS records of every apex in our K8s set from the same 17 April 2026 NS-typed crawl (3,101 archives, 19 GB after xz compression) and classified each apex by its DNS hosting provider. 50,701 of the 74,508 K8s apexes (68.0%) were matched to one of twenty recognised DNS-hosting providers; the remainder are on smaller / regional DNS providers we did not classify, or on registrar-default nameservers.

| DNS provider | TXT-side apexes (of 41,565) | CNAME-strict apexes (of 34,219) | TXT % | CNAME % |
|---|---|---|---|---|
| Route 53 (AWS) | 17,877 | 9,061 | 43.0% | 26.5% |
| Cloudflare | 10,606 | 5,151 | 25.5% | 15.1% |
| Cloud DNS (Google) | 2,194 | 705 | 5.28% | 2.06% |
| GoDaddy (domaincontrol.com) | 1,040 | 2,642 | 2.50% | 7.72% |
| OVH | 351 | 96 | 0.84% | 0.28% |
| DigitalOcean | 232 | 64 | 0.56% | 0.19% |
| NS1 / IBM | 214 | 233 | 0.52% | 0.68% |
| Azure DNS | 204 | 192 | 0.49% | 0.56% |
| Akamai | 161 | 120 | 0.39% | 0.35% |
| Gandi | 125 | 122 | 0.30% | 0.36% |
| DNS Made Easy | 114 | 90 | 0.27% | 0.26% |
| Hetzner | 76 | 22 | 0.18% | 0.06% |
| CloudNS | 35 | 23 | 0.08% | 0.07% |
| Enom | 13 | 35 | 0.03% | 0.10% |

Three findings drop out of the cross-tab.

Route 53 hosts 43% of TXT-side K8s apexes. Route 53's general-DNS share is roughly 6%; the 43% concentration here is a 7× over-representation, and it is consistent with EKS's dominance of the visible managed-K8s LB layer (98% of strict CNAME). Operators running EKS overwhelmingly use Route 53 to host the cluster's DNS, ExternalDNS reconciles the records there, and the heritage=external-dns markers we extract are predominantly Route-53-hosted. Any Route 53 outage is, in K8s-via-DNS terms, a multi-thousand-cluster blast radius.

Cloudflare is the second-largest K8s-via-DNS hosting layer at 25.5% TXT / 15.1% CNAME. Cloudflare's general-DNS share is roughly 11%; its 25.5% share of the TXT-side population (roughly a 2.3× over-representation) reflects two things: (1) Cloudflare is a popular DDoS/WAF front for K8s ingresses regardless of where the cluster runs, and (2) Cloudflare's free tier is the de facto DNS for cloud-native indie / developer-tier projects, the same population that disproportionately runs ExternalDNS. The CNAME-side share is lower because the largest single block of those apexes uses Route 53 directly (EKS pattern). The asymmetry is the cleanest divergence in the table.

Azure DNS is conspicuously under-represented at 0.49% TXT / 0.56% CNAME. AKS has a meaningful market share at the cluster layer, but at the public-DNS-edge layer almost no AKS operators use Azure DNS for the customer-apex zone. They route through Cloudflare or Route 53 instead. The pattern matches the strict-CNAME finding (only 63 strict-AKS apexes total): the Azure ingress story exists, but it is largely invisible at the public DNS layer, either because Azure DNS hosting is not the default workflow Microsoft markets to AKS users, or because AKS users front their clusters with non-Azure CDN/WAF layers.

Together, AWS Route 53 and Cloudflare host 68.5% of TXT-side K8s apexes and 41.6% of CNAME-strict apexes. The K8s public-DNS layer is a two-vendor concentration story for the TXT-side population, and a Route-53-with-a-cloudflare-tail story for the strict-CNAME population.

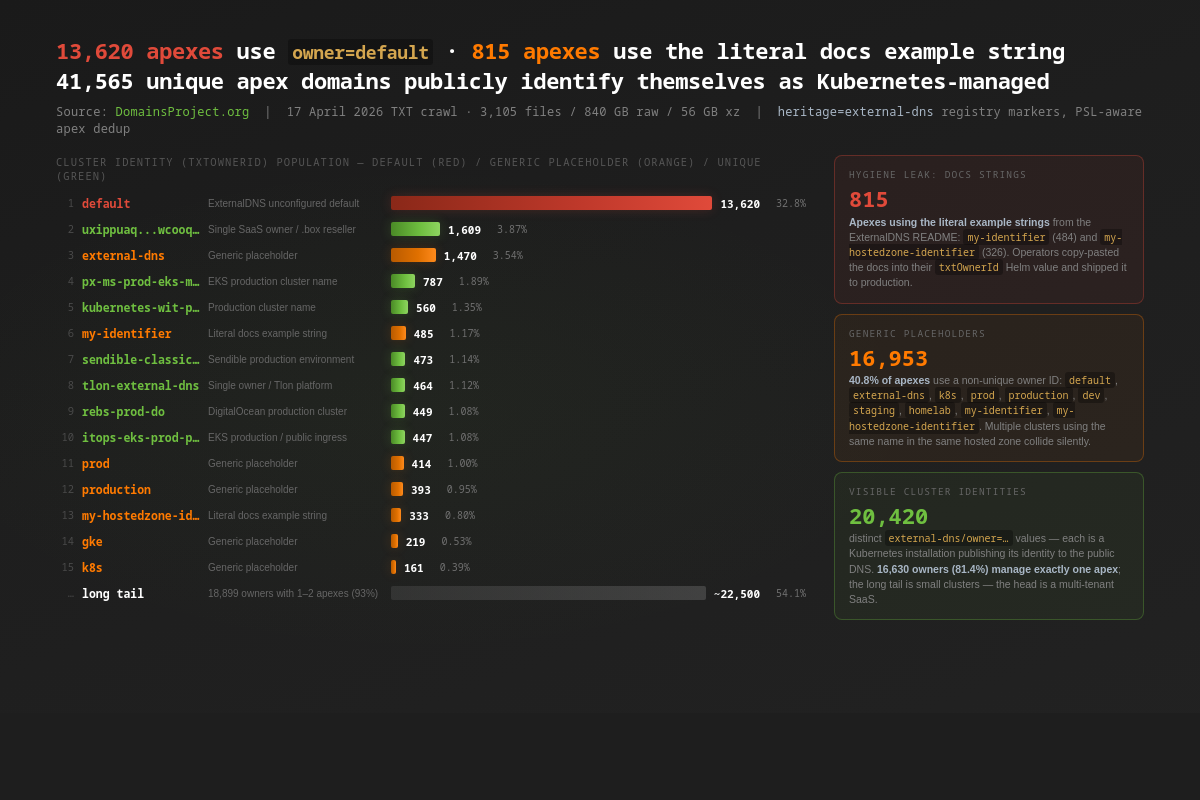

The Cluster Identity Layer

ExternalDNS' txtOwnerId setting — external-dns/owner=… in the marker — is the single most important configuration value for safe multi-cluster operation. The controller's TXT-registry collision-detection logic uses exactly this string. If two clusters share a hosted zone and both have txtOwnerId=default, neither will see the other's records as foreign; each will think the other's records are its own and will start fighting over them on the next reconcile. The only thing protecting an operator from cross-cluster flapping is the uniqueness of the owner string. The ExternalDNS documentation says so explicitly: "In environments where you have multiple clusters running ExternalDNS this should be unique to the cluster."
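The failure mode can be modelled in a few lines. This is a deliberately simplified model of the ownership rule described above, not ExternalDNS's actual code; the record type and both function names are hypothetical.

```go
package main

import "fmt"

// record is a managed DNS name plus the owner string from its TXT marker.
type record struct {
	Name  string
	Owner string
}

// mayModify models the registry rule in simplified form: a controller only
// touches records whose marker carries its own owner ID.
func mayModify(controllerOwnerID string, r record) bool {
	return r.Owner == controllerOwnerID
}

// contested lists the records that two controllers sharing one zone would
// both treat as their own.
func contested(ownerA, ownerB string, zone []record) []string {
	var names []string
	for _, r := range zone {
		if mayModify(ownerA, r) && mayModify(ownerB, r) {
			names = append(names, r.Name)
		}
	}
	return names
}

func main() {
	zone := []record{
		{Name: "app.example.com", Owner: "default"},
		{Name: "api.example.com", Owner: "cluster-eu1"},
	}
	// Two clusters left on the default owner ID both claim app.example.com.
	fmt.Println(contested("default", "default", zone))
	// With distinct owner IDs no record is claimed by both.
	fmt.Println(contested("cluster-eu1", "cluster-us1", zone))
}
```

With distinct owner IDs the contested set is always empty, because a record carries exactly one owner string and cannot equal two different IDs at once.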

We see 20,420 distinct owner values across 41,565 apexes. The distribution is heavily skewed: 16,630 owners (81.4%) manage exactly one apex each (small, well-named, isolated clusters), while a small head of owner strings spans many apexes. Part of that head is legitimate multi-apex SaaS or platform installations. The structural problem is the rest of it, where the most common owner strings are not cluster identifiers at all.

The default value owns 32.8% of the population

| Owner string | Apexes | Records | % apexes |
|---|---|---|---|
| default | 13,620 | 122,735 | 32.8% |
| external-dns | 1,470 | 44,858 | 3.54% |
| my-identifier | 485 | 4,490 | 1.17% |
| prod | 414 | 1,970 | 1.00% |
| production | 393 | 1,351 | 0.95% |
| my-hostedzone-identifier | 333 | 1,842 | 0.80% |
| gke | 219 | 467 | 0.53% |
| k8s | 161 | 1,583 | 0.39% |
| homelab | 1 | 1,790 | 0.002% |

default is the value ExternalDNS assigns when an operator deploys the controller without setting txtOwnerId. The Helm chart's default is default; the Kustomize bundle's default is default; the manifest in the README's quickstart is default. One in three Kubernetes-managed apex domains has not changed it. A second 1,470 apexes (3.5%) have changed it to the equally non-unique string external-dns, which is what some cluster operators reach for when they realise the original is generic but treat the controller name itself as the cluster identifier. Together, the two literal-default values cover 15,027 apexes — 36.2% of the population (with 63 apexes carrying both, presumably from operators who migrated mid-deployment without cleaning up old markers).

The literal documentation-example strings tell a separate story. The ExternalDNS README's "Manifest" example uses --txt-owner-id=my-identifier; the older AWS quickstart on the docs site uses --txt-owner-id=my-hostedzone-identifier. 815 apexes in our crawl have one of these two literal example strings as their owner: the operator copy-pasted the docs into their config and never edited it. Three of the 815 have both example strings on records under the same apex, suggesting two separate ExternalDNS deployments, both unconfigured. (Across the full generic-placeholder list defined in the methodology, which adds the default and external-dns populations and environment names such as prod and production, the total reaches 16,953 apexes, or 40.8% of the entire visible Kubernetes-via-DNS population, running on a non-unique placeholder.)

This is the headline hygiene number of the post. It is not, on its own, a security exploit; the marker is meant to be public. It is a population-scale operator-discipline finding: 41% of public Kubernetes-via-DNS deployments use a placeholder string where the controller expects a unique cluster identifier, and the controller's only collision-detection guarantee is the uniqueness of that string.

Route 53 zone IDs leaked as owner strings

Among the 41,565 TXT-side apexes, 2,268 (5.5%) carry an owner value that matches the shape of an AWS Route 53 hosted-zone identifier: a leading Z followed by 12-21 alphanumeric characters (AWS issues these in upper case; the values in our data are lower-cased). These are clearly auto-derived: an operator (or a Terraform module) has set txtOwnerId="${aws_route53_zone.this.zone_id}" instead of choosing a meaningful cluster name. The literal Route 53 zone ID is a public identifier, not a credential, but publishing it in DNS ties the cluster's owner ID to a specific AWS resource and reveals the hosted zone the cluster writes to. For a defender doing a cluster-to-zone audit, this is convenient. For an attacker building a cluster-architecture map, it is also convenient.
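A shape test of this kind reduces to one regular expression. The pattern below is an assumption reconstructed from the description in the text (a z followed by 12 to 21 alphanumerics, matched case-insensitively), not the exact expression used for the census; looksLikeZoneID is a hypothetical name.

```go
package main

import (
	"fmt"
	"regexp"
)

// zoneIDShape encodes the heuristic: "z" followed by 12-21 alphanumerics.
// Case-insensitive matching is an assumption made for this sketch.
var zoneIDShape = regexp.MustCompile(`(?i)^z[a-z0-9]{12,21}$`)

// looksLikeZoneID reports whether an owner string has the shape of a
// Route 53 hosted-zone ID. It is a shape check only and cannot confirm
// that the zone exists.
func looksLikeZoneID(owner string) bool {
	return zoneIDShape.MatchString(owner)
}

func main() {
	for _, owner := range []string{"zwelawdj3pp9s", "default", "my-identifier"} {
		fmt.Printf("%-16s %v\n", owner, looksLikeZoneID(owner))
	}
}
```

Because this is a shape heuristic, an ordinary owner string that happens to start with z and has the right length would also match, so the result is best read as "recognisably zone-ID-shaped" rather than verified.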

The single largest example: clickbank.net's 443,575 ExternalDNS-managed hostnames are owned by a single cluster identity, zwelawdj3pp9s — exactly the shape of a Route 53 zone ID, and on inspection of the AWS hosted-zone metadata for clickbank.net, exactly the zone ID. ClickBank's affiliate-hop infrastructure runs on EKS with one ExternalDNS instance, the txtOwnerId is set from the zone ID, and 443,575 affiliate redirect hostnames of the form <8-char-shortcode>.hop.clickbank.net are publicly enumerable from the registry.

Two-apex owners and the "homelab" tail

homelab is the 18th most-common owner string in our data — 1,790 records under one owner, all on a single Romanian apex. Several dozen variants — homelab-k3s, k3s-homelab, homelab-cluster, sebops-homelab, k8s-homelab, homelab-vps, compute-lab, dbseks-lab — push the total to a few thousand records under owners with the substring "lab" or "homelab". Each of these is a hobbyist Kubernetes cluster running ExternalDNS against a public DNS zone, complete with a publicly enumerable inventory of every ingress in the cluster. The hostnames we recovered include backup-server pointers, Vaultwarden front ends, Plex / Jellyfin instances, internal Grafana, internal Wireguard, Argo CD UIs, and (in one case) an exposed prometheus.${homelab}.de pointer.

The legitimate use of ExternalDNS in a homelab is to give cluster services nice DNS names — a reasonable engineering choice. The unintended consequence is that the operator's internal architecture is now part of the public DNS, including the K8s namespace and resource name of every ingress they have ever created.

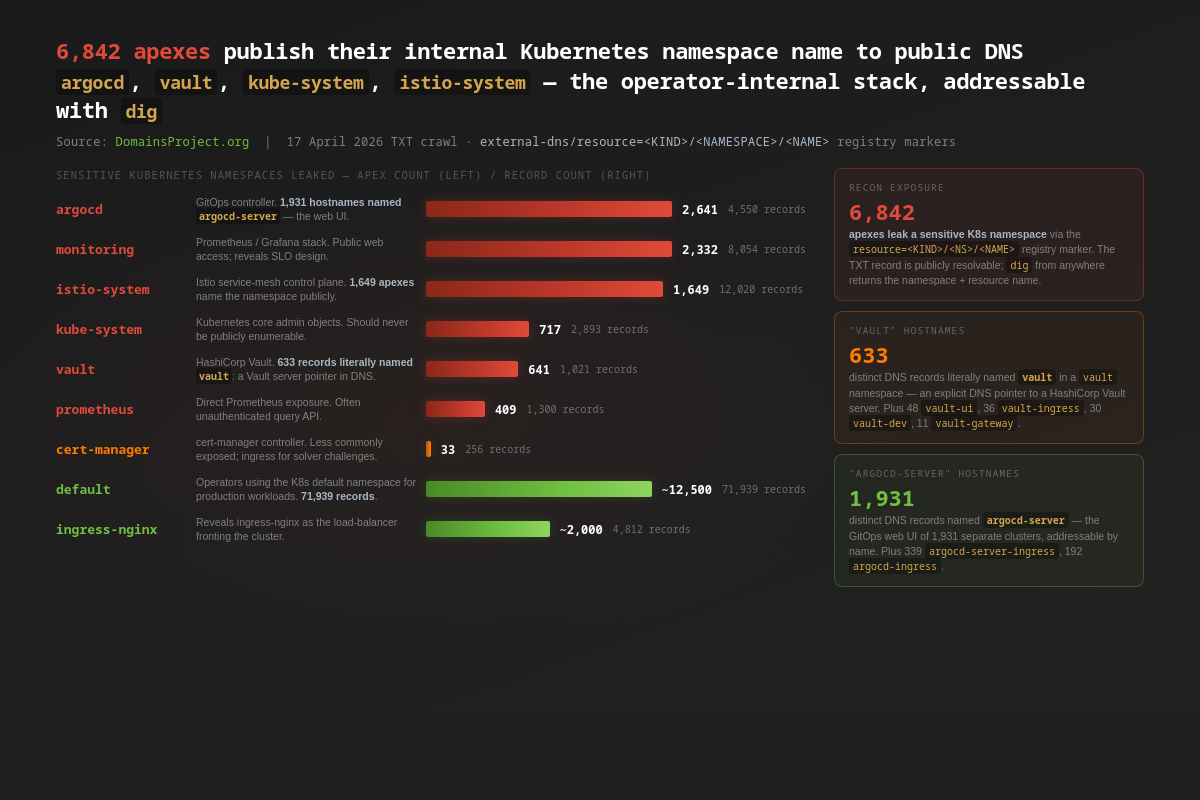

What ExternalDNS Markers Tell Recon

The third field in the marker — external-dns/resource=<KIND>/<NAMESPACE>/<NAME> — is the highest-signal part of the record for an attacker building a recon profile. It tells you, for every K8s-managed hostname, which Kubernetes object kind created it (Ingress, Service, HTTPRoute, Gateway, VirtualService, IngressRoute, HTTPProxy, Host, …), which namespace the object lives in, and what the object is named. Together those three fields are the operator's cluster topology, in plain DNS, addressable with dig.

We classified namespaces into a "sensitive" bucket — namespaces whose appearance in the public DNS narrows an attacker's recon work meaningfully:

| Namespace | Apexes | Records | Top exposed resource names |
|---|---|---|---|
| argocd | 2,641 | 4,550 | argocd-server (1,931), argocd-server-ingress (339), argocd-ingress (192), argocd-server-grpc-ingress (83) |
| monitoring | 2,332 | 8,054 | (Prometheus / Grafana / Alertmanager front ends) |
| istio-system | 1,649 | 12,020 | (Istio ingress gateway, control-plane services) |
| kube-system | 717 | 2,893 | (Kubernetes core admin objects; should never be public) |
| vault | 641 | 1,021 | vault (633), vault-ui (48), vault-ingress (36), vault-dev (30), vault-internal (14), vault-gateway (11) |
| prometheus | 409 | 1,300 | (Prometheus query-API front ends) |
| cert-manager | 33 | 256 | (cert-manager controller; mostly ACME-DNS-01 solver pointers) |

argocd-server is the GitOps controller's web UI, the front door to a cluster's deployment pipeline. 1,931 distinct hostnames in our data are backed by a resource literally named argocd-server in an argocd namespace. Some are protected behind SSO; many (by the operator's own admission in past disclosures) are reachable directly. Argo CD has had at least four CVEs of the form "auth bypass on the gRPC server" or "authenticated user can read other-project apps" in the last two years, and a publicly enumerable argocd-server hostname meaningfully shrinks the reconnaissance an attacker has to do.

vault is the second standout. 633 hostnames are backed by a resource literally named vault in a vault namespace; these are pointers to HashiCorp Vault server endpoints. Vault is intended to be reachable by applications (it is a service); the question is whether it is reachable by the public Internet with TLS terminated at the cluster ingress, or only from inside the VPC. The DNS record alone does not distinguish, but it hands the attacker the literal hostname of the Vault server, a starting point that would otherwise require active reconnaissance.

kube-system is the most surprising. The Kubernetes core admin namespace should never have publicly addressable services in the operator's intended design, yet 717 apexes have at least one ExternalDNS-managed record whose owner Kubernetes object lives in kube-system. The most common pattern in the data is operators who deployed the ExternalDNS controller into kube-system itself and then created Ingress objects in the same namespace — those Ingress hostnames carry the namespace label even though the namespace itself is not the security risk. Some are operator mistakes (an Ingress in kube-system shadowing CoreDNS); some are quirks of how a cloud-managed Kubernetes (EKS, GKE, AKS) provisions admission-control hostnames that flow through the same ExternalDNS instance.

The recon-exposure number we publish — 6,842 apexes leak a sensitive namespace name — is conservative. It excludes the long tail of cluster-internal namespace names whose meaning is obvious to a defender (backend, frontend, payments-prod, keycloak, traefik, nginx-ingress-controller) but whose security weight depends on which application is running there. The full universe of operator-internal architecture metadata leaked at the resource layer is much larger: 53,753 distinct namespace names appear in our extracted markers.

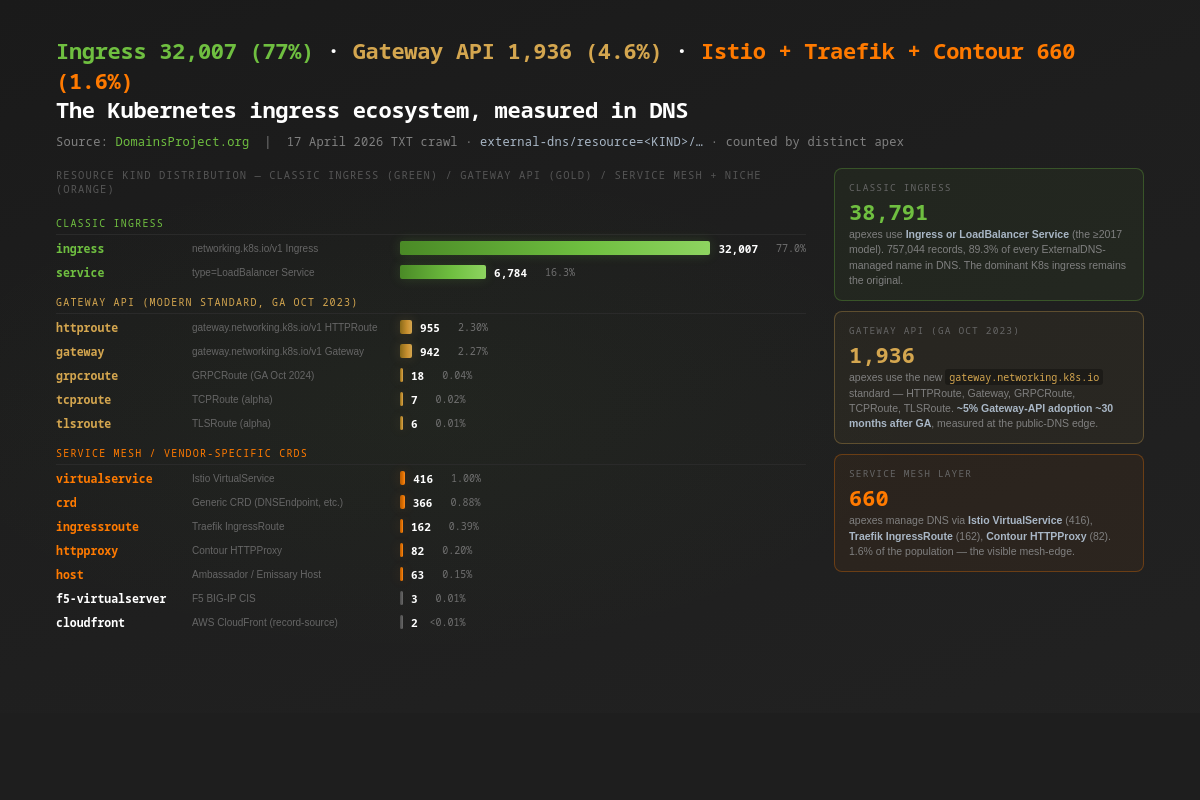

Resource Kinds: The Ingress Ecosystem in DNS

ExternalDNS supports a long tail of Kubernetes object kinds as record sources. The kind shows up in the marker, so the data is a uniform snapshot of how operators choose to express their cluster ingress in 2026. The headline:

| Kind group | Apexes | Records | % records |
|---|---|---|---|
| Classic Ingress (Ingress + LoadBalancer Service) | 38,791 | 820,112 | 96.7% |
| Gateway API (HTTPRoute + Gateway + GRPCRoute + TCPRoute + TLSRoute) | 1,936 | 17,855 | 2.11% |
| Service mesh (Istio VirtualService, Traefik IngressRoute, Contour HTTPProxy, Ambassador Host) | 660 | 3,768 | 0.44% |
| Generic CRDs (DNSEndpoint and other custom resources) | 366 | 3,243 | 0.38% |
| Niche / vendor (F5VirtualServer, AWS cloudfront, Route openshift, RouteGroup skipper) | 178 | 826 | 0.10% |

The Ingress object (networking.k8s.io/v1 Ingress today, a design that first shipped as a beta API in Kubernetes 1.1 in 2015) is still the dominant abstraction. 77% of K8s-managed apex domains use an Ingress as their externally-facing record source; another 16% use a LoadBalancer Service. Together those two cover 93% of the apex population.

The Gateway API — the modern replacement standard, which reached v1 GA on 31 October 2023 — is at 1,936 apexes, roughly 5% of the K8s apex population thirty months after GA. By kind: 955 apexes use HTTPRoute, 942 use Gateway, 18 use GRPCRoute, 7 use TCPRoute, 6 use TLSRoute. The HTTPRoute and Gateway numbers are nearly equal because most installations create one Gateway per environment and many HTTPRoutes pointing at it; per-apex the two roughly co-occur. This is the first publicly measurable adoption number for the Gateway API at the public-DNS edge that we are aware of, and it lines up with anecdotal CNCF survey data showing Gateway API as the intended standard but not yet the dominant one.

The service-mesh layer is small but visible: 416 apexes use Istio VirtualService, 162 use Traefik IngressRoute, 82 use Contour HTTPProxy, 63 use Ambassador / Emissary Host. The Istio number is striking because Istio installations are often air-gapped or behind cloud-load-balancer abstractions that don't surface in DNS at all; the 416 we see are the publicly visible edge of the Istio install base.

The long tail (28 distinct kinds total) includes operator-specific resources that shipped with their own ExternalDNS source plugins: F5's F5-VirtualServer CIS object, AWS CloudFront distributions, OpenShift Route, Skipper's RouteGroup. The NodePort Service and the Manual source — for static-record imports — round out the catalogue.

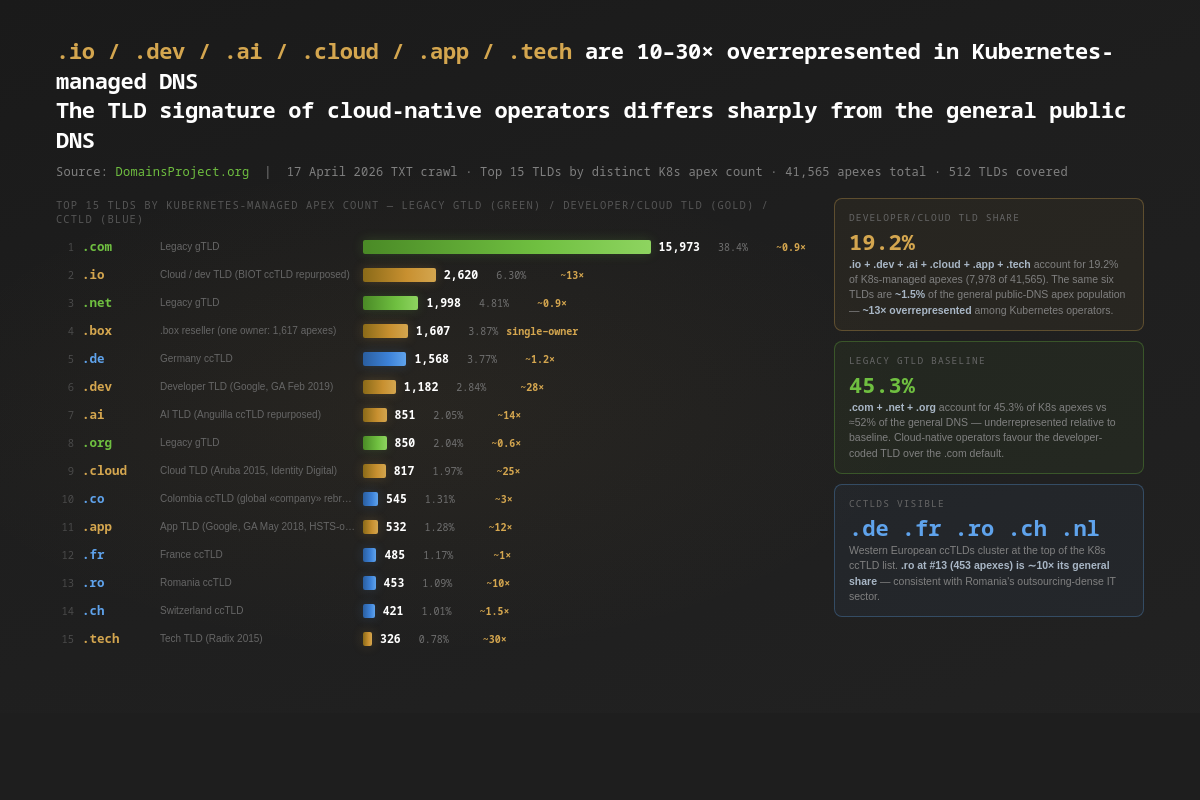

The TLD Signature of Cloud-Native

The TLD distribution of K8s-managed apexes diverges sharply from the general public-DNS apex population. The top fifteen by apex count:

| Rank | TLD | Apexes | % | Approx. share in general DNS | Approx. K8s overrepresentation |
|---|---|---|---|---|---|
| 1 | .com | 15,973 | 38.4% | 44% | 0.9× |
| 2 | .io | 2,620 | 6.30% | 0.5% | ~13× |
| 3 | .net | 1,998 | 4.81% | 5.0% | 1.0× |
| 4 | .box | 1,607 | 3.87% | <0.01% | single-owner anomaly |
| 5 | .de | 1,568 | 3.77% | 3.0% | 1.2× |
| 6 | .dev | 1,182 | 2.84% | 0.10% | ~28× |
| 7 | .ai | 851 | 2.05% | 0.15% | ~14× |
| 8 | .org | 850 | 2.04% | 3.4% | 0.6× |
| 9 | .cloud | 817 | 1.97% | 0.08% | ~25× |
| 10 | .co | 545 | 1.31% | 0.45% | ~3× |
| 11 | .app | 532 | 1.28% | 0.10% | ~12× |
| 12 | .fr | 485 | 1.17% | 1.1% | 1.1× |
| 13 | .ro | 453 | 1.09% | 0.10% | ~10× |
| 14 | .ch | 421 | 1.01% | 0.7% | 1.5× |
| 15 | .tech | 326 | 0.78% | 0.025% | ~30× |

.io (the British Indian Ocean Territory ccTLD, long adopted by developers), .dev (Google, GA February 2019, HSTS-preloaded), .ai (Anguilla's ccTLD, AI-rebranded), .cloud (Aruba), .app (Google, GA May 2018, also HSTS-preloaded), and .tech (Radix) are roughly 10-30× their general-DNS share among Kubernetes-managed apexes. Combined, the six TLDs cover 15.2% of K8s apexes (6,328 of the 41,565 TXT-side apexes, summing the rows above) despite accounting for roughly 1% of the general public-DNS apex population. The TLD layer is one of the cleanest cloud-native signals visible from outside a cluster: operators choose the TLD before they choose the cloud, and the choice is statistically tied to the choice of stack.

The .com / .net / .org legacy block is under-represented relative to the general-DNS baseline (45.3% vs ~52%). This is not because cloud-native operators avoid .com (the absolute count is the largest in the table) but because the developer-coded TLDs claim a much larger share than they would in a population-uniform sample. Anyone reasoning about "where do modern web applications live" who omits .io / .dev / .ai / .cloud / .app / .tech is missing roughly 15% of the cloud-native footprint.
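The overrepresentation column is simply one share divided by the other, and the legacy-block comparison is a sum of rows. A minimal sketch of that arithmetic, using a handful of rows copied from the table above (the helper names are ours, not part of any published tooling):

```python
# Shares in percent, copied from the table above:
# (share among K8s apexes, approximate share in general DNS).
SHARES = {
    ".com": (38.4, 44.0),
    ".net": (4.81, 5.0),
    ".org": (2.04, 3.4),
    ".io": (6.30, 0.5),
    ".dev": (2.84, 0.10),
    ".tech": (0.78, 0.025),
}


def overrepresentation(tld: str) -> float:
    """Ratio of a TLD's K8s-apex share to its general-DNS share."""
    k8s_share, general_share = SHARES[tld]
    return k8s_share / general_share


def block_share(tlds: list[str]) -> tuple[float, float]:
    """Summed (K8s share, general-DNS share) for a group of TLDs."""
    k8s = sum(SHARES[t][0] for t in tlds)
    general = sum(SHARES[t][1] for t in tlds)
    return k8s, general


for tld in (".io", ".dev", ".tech"):
    print(f"{tld}: {overrepresentation(tld):.1f}x")

legacy_k8s, legacy_general = block_share([".com", ".net", ".org"])
print(f"legacy block: {legacy_k8s:.2f}% of K8s apexes vs {legacy_general:.1f}% of general DNS")
```

Because the general-DNS shares are themselves approximations, the ratios should be read to about one significant figure, which is why the table rounds them.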

The .box anomaly at rank 4 is a single-owner artifact. Essentially all of the 1,607 distinct .box apexes carry the same owner ID, uxippuaqbuskbunoxwcooqlo, a 24-character lowercase string that is almost certainly a generated cluster identifier from a domain-management platform that operates the .box reseller infrastructure. One ExternalDNS install is reconciling DNS for ~1,600 customer apexes on .box, all on a single Kubernetes cluster.
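A single-owner anomaly of this kind is easy to flag mechanically: group apexes by TLD and ask what fraction share the most common owner ID. The sketch below is illustrative only. The function name and the sample data are invented, and it takes the last DNS label as the TLD instead of consulting the Public Suffix List.

```python
from collections import Counter, defaultdict


def dominant_owner_by_tld(records):
    """Map each TLD to (most common owner ID, fraction of apexes carrying it).

    `records` is an iterable of (apex, owner_id) pairs, one per distinct apex.
    Simplification: the TLD is taken to be the last label of the apex.
    """
    owners = defaultdict(Counter)
    for apex, owner_id in records:
        tld = "." + apex.rsplit(".", 1)[-1]
        owners[tld][owner_id] += 1

    result = {}
    for tld, counts in owners.items():
        owner_id, hits = counts.most_common(1)[0]
        result[tld] = (owner_id, hits / sum(counts.values()))
    return result


# Toy data, invented for illustration.
sample = [
    ("alpha.box", "owner-a"),
    ("beta.box", "owner-a"),
    ("gamma.box", "owner-a"),
    ("example.io", "owner-b"),
    ("example2.io", "owner-c"),
]
print(dominant_owner_by_tld(sample))
```

A TLD with well over a thousand apexes and a dominant-owner fraction close to 1.0 is the signature described above; a healthy TLD shows many owners with small fractions each.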

The Romanian .ro outlier at rank 13 is consistent with the country's outsized cloud-native developer community: Romania has one of Europe's densest IT-outsourcing sectors per capita, and many of its operators run small EU-hosted Kubernetes clusters. .ro is roughly 10× its general-DNS share among K8s apexes; we did not observe a comparable signal for any other Eastern European ccTLD at this scale.

The Largest Visible Clusters

The ten largest individual K8s clusters in the dataset, by managed-hostname count:

| Apex | Hostnames | Owner ID | Notable resource pattern |
|---|---|---|---|
| clickbank.net | 443,572 | zwelawdj3pp9s (Route 53 zone ID) | ingress/orders/attribution (affiliate-hop short-codes) |
| bcvp0rtal.com | 19,991 | (multi-tenant SaaS) | per-customer subdomain ingress |
| ascendify.com | 19,212 | (multi-tenant SaaS) | per-customer recruitment-tech subdomains |
| hiper.dk | 16,406 | (Danish ISP / hosting) | per-customer ingress |
| startpagina.nl | 12,139 | (Dutch portal) | per-topic landing-page ingress |
| comcast.net | 3,287 | (residual reverse-DNS-style) | infrastructure pointers |
| mypension365.ie | 3,243 | niac-prodeuw1 | per-employer pension-portal subdomain |
| crmrebs.com | 2,188 | rebs-prod-do | per-account CRM ingress |
| intuit.com | 1,912 | (Intuit corporate) | per-product ingress |
| glean.ninja | 1,769 | homelab | hobbyist multi-app cluster |

The clickbank.net row is noteworthy in two ways. First, it is a textbook example of how a single business-logic decision — short-code-based affiliate redirects — turns into hundreds of thousands of K8s-managed DNS records. Second, the external-dns/resource=ingress/orders/attribution marker tells us that ClickBank's attribution layer is implemented as a single Kubernetes Ingress object in the orders namespace, named attribution, generating a wildcard-style hostname per affiliate session. The marker compresses the attacker's recon work in a way that no other public artifact could: it tells you the namespace, the resource name, and the kind, all in one DNS query.
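To make concrete how little work the marker leaves for a reader, here is a minimal parsing sketch. It assumes the same-name TXT registry layout described earlier (comma-separated key=value pairs with a KIND/NAMESPACE/NAME resource path); the `Marker` type, the `parse_marker` name, and the owner ID in the example are ours.

```python
from typing import NamedTuple, Optional


class Marker(NamedTuple):
    owner: str
    kind: str
    namespace: str
    name: str


def parse_marker(txt: str) -> Optional[Marker]:
    """Parse one TXT data string; return None if it is not an ExternalDNS marker."""
    txt = txt.strip().strip('"')  # dig prints TXT data wrapped in quotes
    fields = dict(part.split("=", 1) for part in txt.split(",") if "=" in part)
    if fields.get("heritage") != "external-dns":
        return None
    pieces = fields.get("external-dns/resource", "").split("/", 2)
    if len(pieces) != 3:
        return None
    kind, namespace, name = pieces
    return Marker(fields.get("external-dns/owner", ""), kind, namespace, name)


# Hypothetical owner ID; the resource path is the one quoted above.
example = (
    "heritage=external-dns,external-dns/owner=example-owner,"
    "external-dns/resource=ingress/orders/attribution"
)
print(parse_marker(example))
```

One TXT answer yields the kind, namespace, and object name directly, with no further correlation needed.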

The glean.ninja row at #10 (1,769 hostnames under owner homelab on a .ninja apex) is a hobbyist cluster that appears to host an entire personal-application platform (Plex, Jellyfin, Vaultwarden, WireGuard front ends, Argo CD, Grafana). The owner string homelab is non-unique at the population level but unique inside this single hosted zone, so the cluster is not at risk of collision. The exposure problem is the inventory itself.

What's at Stake

- The txtOwnerId default is the largest single safety gap in cluster-DNS hygiene. ExternalDNS' collision-detection design relies on the operator setting a unique txtOwnerId. 36.2% of the population sits on the literal default value (default or external-dns), and another 4.6% sits on the literal docs example strings. In environments where two clusters share a hosted zone (common in multi-region DR setups, cloud-account migrations, and platform-managed Kubernetes services with shared ingress zones) this is a live foot-gun.

- The resource path is operator-internal architecture metadata in plain DNS. Namespace names, resource names, and kind names tell an attacker which controllers and platform components a cluster runs, which application sits behind which hostname, and which cluster a record belongs to, all of it readable by anyone with dig. 6,842 apexes leak a sensitive namespace name; 53,753 distinct namespaces appear in the data. The marker design predates the modern threat model in which DNS itself is a recon target.

- Gateway API adoption is real but slow. 1,936 apexes (~5%) have moved to the new standard thirty months after GA. The remaining ~95% are still on classic Ingress, LoadBalancer Services, and other pre-Gateway sources. CNCF roadmaps assume Gateway API will dominate within a release cycle or two; the public-DNS measurement says operators are taking longer than that.

- The prefix-registry mode hides part of the modern install base, even with the CNAME closure. Same-name TXT registries, the only kind we can extract from a hostname-keyed TXT corpus, are the older ExternalDNS default. Prefix mode (a-<hostname>, _extdns.<hostname>, etc.) puts the marker on a synthetic name that the TXT corpus does not query. The CNAME-side census closes part of this gap, because any prefix-registry install that fronts its cluster with a managed cloud LB will appear in the strict-CNAME set, but operators who use both prefix-registry mode and custom non-k8s-LB names remain invisible at both layers. The 74,508 union we publish is therefore still a lower bound; the true global ExternalDNS-managed apex population is some unknown multiple of this.

- Two vendors host 68.5% of TXT-side K8s apexes' DNS. Route 53 and Cloudflare together carry the public-DNS edge of the population. A multi-hour Route 53 outage degrades the DNS layer of ~18,000 visible Kubernetes installations (TXT side) plus ~9,000 more on the strict-CNAME side. A multi-hour Cloudflare outage degrades ~10,600 + ~5,100. The two providers are not redundant for this population: most operators use one or the other, not both. The cluster layer's resilience story is well-told in CNCF surveys; the DNS layer's resilience story is a Route-53-and-Cloudflare two-vendor risk that the cluster surveys never see.

- Stale ExternalDNS markers persist for years. When a cluster is rebuilt, decommissioned, or migrated to prefix-registry mode, the old TXT records often remain in the hosted zone. dig returns them; ExternalDNS no longer manages them. The dataset includes a measurable layer of ghost markers from clusters that no longer exist. We did not attempt to separate live markers from ghosts in this analysis.
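The owner-ID foot-gun in the first point can be pictured with a deliberately simplified model. This is not ExternalDNS's code: we assume only that a controller treats a record as its own when the owner in the TXT marker equals its configured owner ID, and that records it owns but has no source object for are candidates for cleanup. All names and hostnames below are hypothetical.

```python
def owns(record_owner: str, my_owner_id: str) -> bool:
    """Simplified ownership test: the marker's owner equals our configured ID."""
    return record_owner == my_owner_id


def records_at_risk(zone_markers: dict[str, str], my_owner_id: str,
                    my_hostnames: set[str]) -> list[str]:
    """Hostnames this controller would claim but has no source object for.

    In this model those are the records a sync might remove, even though
    another cluster created them.
    """
    return sorted(
        host
        for host, owner in zone_markers.items()
        if owns(owner, my_owner_id) and host not in my_hostnames
    )


# Two clusters share one hosted zone and both kept the placeholder owner ID.
shared_zone = {"a.example.com": "default", "b.example.com": "default"}
print(records_at_risk(shared_zone, "default", {"a.example.com"}))

# With unique owner IDs, cluster A never claims cluster B's record.
unique_zone = {"a.example.com": "cluster-a", "b.example.com": "cluster-b"}
print(records_at_risk(unique_zone, "cluster-a", {"a.example.com"}))
```

Whether a real controller goes on to delete such records depends on how it is configured; the point of the model is only that identical owner IDs remove the one check that would have stopped it.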

What Would Help

1. ExternalDNS upstream: change the default txtOwnerId to a generated cluster ID. The lowest-effort, highest-impact intervention is for the controller to refuse to start with txtOwnerId set to default, external-dns, my-identifier, my-hostedzone-identifier, or any other recognisably generic string, or alternatively to auto-generate a stable cluster UUID at install time and use that as the default. Either approach removes the 36.2% population from the placeholder layer in one release.

2. Helm chart maintainers: stop shipping default as the value. The Helm chart ships txtOwnerId: default in its values.yaml. The Kustomize bundle does the same. The README's quickstart manifest does the same. The README's "Configuration" subsection uses my-identifier. All four of those defaults are the literal strings dominating our data. Shipping txtOwnerId: "" and a clear error message when the value is empty would be a one-line change in the chart and would close the gap for new installs.

3. Operators: redact the resource path. ExternalDNS has supported encrypting registry TXT content since v0.13 (the --txt-encrypt-enabled and --txt-encrypt-aes-key flags). Operators concerned about resource-path exposure can enable encryption and supply an AES key to encrypt the marker payload. We saw exactly 57 apexes with hex-shaped owner strings consistent with encryption, a vanishingly small adoption rate. The feature exists; almost no one uses it.

4. Cloud DNS providers: surface "publicly visible Kubernetes inventory" in the console. Route 53, Cloud DNS, and Azure DNS could detect heritage markers and warn the operator that their cluster's resource topology is publicly enumerable — and offer a one-click migration to prefix registry mode or AES-encrypted markers. None do today.

5. Researchers: extend the strict CNAME catalogue. The 34,219 apexes we report from the strict CNAME side use seven managed-K8s suffixes. Several adjacent populations are missing from the catalogue: AWS NLB-style names that don't follow the k8s- prefix convention, Azure App Gateway IPs, Linode NodeBalancer hostnames, Vultr Kubernetes Engine LBs, Scaleway K8s Kapsule LBs, IBM Cloud Kubernetes Service LBs, and the Crossplane / Cluster API operator-DNS pattern. A community-maintained catalogue of "this CNAME suffix-shape means K8s-managed" patterns would extend the visible-footprint estimate meaningfully and close more of the prefix-registry blind spot.

6. Auditors: add ExternalDNS marker review to standard cloud-DNS reviews. Marker hygiene is exactly the kind of finding that CIS / NIST / SOC2 audits should surface and currently do not. A 200-line script that checks (default | external-dns | my-* | k8s | prod | …) against the operator's hosted zones is the deliverable.
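The core of the audit check in item 6 fits in far fewer than 200 lines. The sketch below is a starting point only: it covers just the placeholder strings named in this article, the function names are ours, and it assumes the auditor has already exported (hostname, TXT value) pairs from the hosted zone by whatever means the DNS provider offers.

```python
import fnmatch

# Placeholder owner IDs named in this article; extend for your own environment.
PLACEHOLDER_PATTERNS = ["default", "external-dns", "my-*", "k8s", "prod"]


def is_placeholder(owner_id: str) -> bool:
    """True if the owner ID matches one of the generic placeholder patterns."""
    owner = owner_id.strip().lower()
    return any(fnmatch.fnmatchcase(owner, pattern) for pattern in PLACEHOLDER_PATTERNS)


def audit(zone_records):
    """Return sorted (hostname, owner_id) findings for placeholder owner IDs.

    `zone_records` is an iterable of (hostname, txt_value) pairs.
    """
    findings = []
    for hostname, txt in zone_records:
        fields = dict(
            part.split("=", 1) for part in txt.strip('"').split(",") if "=" in part
        )
        if fields.get("heritage") != "external-dns":
            continue  # not an ExternalDNS registry marker
        owner = fields.get("external-dns/owner", "")
        if is_placeholder(owner):
            findings.append((hostname, owner))
    return sorted(findings)


# Toy zone export, invented for illustration.
zone = [
    ("a.example.com", "heritage=external-dns,external-dns/owner=default,external-dns/resource=ingress/web/site"),
    ("b.example.com", "heritage=external-dns,external-dns/owner=niac-prodeuw1,external-dns/resource=ingress/web/app"),
    ("c.example.com", "v=spf1 -all"),
]
print(audit(zone))
```

The rest of a production script would be zone export, reporting, and an allow-list for intentionally shared owner IDs.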

This analysis used three independent measurements from the same 17 April 2026 DomainsProject DNS crawl: (1) the TXT-typed corpus — 3,105 result archives, 840 GB raw JSONL / 56 GB xz, ~1.9 billion hostname queries — yielded 847,856 heritage=external-dns markers across 837,977 distinct hostnames, deduplicating via the Mozilla Public Suffix List to 41,565 registrable apex domains; (2) the A-typed corpus — 3,105 result archives, 59 GB xz — yielded 9,224,115 CNAME-chain hits against managed-K8s ingress suffixes, deduplicating to 34,219 strict customer-apex matches; (3) the NS-typed corpus — 3,101 result archives, 19 GB xz — joined to the K8s apex set yielded the DNS-hosting-provider classification for 50,701 of the 74,508 K8s apexes. Russian-territorial TLDs (.ru, .su, .moscow, .xn--p1acf, .xn--p1ai) and the .yandex brand TLD are excluded per project policy. The full master corpus and the 17 April 2026 active crawl are documented at /stats/ and /dataset. This is the second in the State-of-TXT series, following the Hidden SaaS Map. Companion posts on the State of SPF, the Parking Lot Index, and the Cloudflare Concentration Map will follow.
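The combination step can be pictured as plain set operations. The sketch below assumes the 74,508 figure is the simple set union of the TXT-side and strict-CNAME-side apex sets, in which case inclusion-exclusion gives the number of apexes visible on both sides. The function names and sample apexes are ours, and the exclusion filter looks only at the last label of each apex.

```python
# TLDs excluded per project policy, as listed above (without the leading dot).
EXCLUDED_TLDS = {"ru", "su", "moscow", "xn--p1acf", "xn--p1ai", "yandex"}


def keep(apex: str) -> bool:
    """True unless the apex sits under an excluded TLD."""
    return apex.rsplit(".", 1)[-1].lower() not in EXCLUDED_TLDS


def combine(txt_side, cname_side):
    """Return (union, intersection) of the two apex sets after exclusions."""
    a = {apex for apex in txt_side if keep(apex)}
    b = {apex for apex in cname_side if keep(apex)}
    return a | b, a & b


# Published counts from this analysis.
txt_apexes = 41_565
cname_apexes = 34_219
union_apexes = 74_508

# Inclusion-exclusion: |A ∪ B| = |A| + |B| - |A ∩ B|
overlap = txt_apexes + cname_apexes - union_apexes
print(overlap)

# Toy apexes, invented for illustration.
union, both = combine(["a.com", "b.ru"], ["a.com", "c.io"])
print(sorted(union), sorted(both))
```

Under that assumption only a small fraction of apexes appear in both signals, which is consistent with the two measurements seeing largely different parts of the install base.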